![[Windows Task Manager]](win-7-taskmgr-cpus.gif)

Note: Links in this article which refer to an off-site page (such as a manufacturer) will open in a new browser tab or window (depending on which browser you are using).

First on the list of changes was "lots more disk space". The design goal was to equip each RAIDzilla II with 32TB. Other goals (beyond "use current hardware") were:

After discussing my requirements with CI Design, I again selected a CI Design chassis - the NSR316 - for the RAIDzilla II. Of particular importance was the "no two the same" issue I'd run into with the original RAIDzilla's SR316 chassis - two of them were a couple inches deeper than the third and they had various other internal differences as well. Also, I mentioned to them that I'd had a problem where I couldn't replace the CD-ROM drives that I purchased as part of the chassis with other DVD-ROM drives, due to the chassis cutout being too small for the DVD-ROM drive tray. CI Design assured me that all of that sort of thing was in the past and that the NSR 316 would be consistent across its production run. I decided to place an initial order for 4 chassis rather than ordering them one-at-a-time, "just in case" (no pun intended).

I selected the Supermicro X8DTH-iF, as it supported the latest (at the time) Intel Xeon CPUs, the 5500/5600 family. It also has 7 PCIe x8 slots, so the limitations I encountered when positioning boards in the original RAIDzilla would not be a problem on the new generation. This board also includes Ethernet-based KVM redirection, so I wouldn't have to make do with the serial console port as I did on the original RAIDzilla. I purchased the board without the optional 8-port SAS controller, as I was going to use an add-in controller.

For the CPUs, I selected the Intel Xeon E5520 as I felt it represented the best value for the dollar at the time. It is still a cost-effective processor - there's a jump of $150 to the next one in the series, the E5530. The 5600 family was not yet available in quantity at the time I made this decision and thus was prohibitively expensive.

Unlike every other boxed Intel CPU I've purchased, the E5520 did not come with a heatsink or fan. Instead, I needed to purchase something called Intel Thermal Solution STS100C, which is a very fancy name for a fan.

As I mentioned in my original RAIDzilla article, I'm partial to Kingston memory. I selected what I felt would be the optimum Kingston part for this system, the KVR1333D3D4R9S/8GHA 8GB module. I installed 6 pieces of this memory for a total of 48GB RAM, filling exactly half of the memory slots on the motherboard. The Supermicro manual indicated that this configuration would provide maximum memory performance. The Kingston part was not listed on the Supermicro web site as a tested, compatible memory module. However, when the memory arrived from Kingston, I discovered that these modules were actually built by Hynix, with part number HMT31GR7AFR4C-H9, which is on the Supermicro tested memory list.

In order to connect a tape drive for backup, I used a Dell SAS 5/E card (which is actually a re-badged LSI 1068-series card). This card provides two external SAS connectors.

Later on in the install, I added a 256GB OCZ Z-Drive R2 P84 PCIe RAID SSD card. More on this later in the article.

As in the original RAIDzilla, I'm using FreeBSD as the operating system. This time I started with FreeBSD 8. This version provided a reasonably up-to-date implementation of the ZFS filesystem without being on the bleeding edge of FreeBSD development (which eventually became FreeBSD 9.x in January, 2012). I've been tracking 8-STABLE (currently based on 8.3) since then, and this version achieved ZFS "feature parity" with the FreeBSD development branch some time ago.

Go into detail here about some/all ports, samba / aio module, etc.


While building and testing the first batch of RAIDzilla II's, I discovered a number of things. This section discusses some of the more memorable items.

OCZ's technical support has been excellent and I've always received replacement boards quickly. The Z-Drive R2 has reached end-of-life status and warranty replacements will be with some other model of drive. This is complicated by the fact that I'm using FreeBSD, which doesn't have a driver for most of OCZ's newer PCIe drives, which use OCZ's SuperScale controller. OCZ tech support was very accommodating and replaced the failed drive with a 320GB VeloDrive which uses the LSI SAS 2004 controller, which is supported under FreeBSD.

After tuning the performance of the ZFS pool, the pool is actually faster than the SSD - the OCZ Z-Drive R2 only has a burst write speed of 500MB/sec (sustained rate of 250MB/sec), while the disk-based ZFS pool has a sustained write speed of 600MB/sec or so. However, the system still benefits from having a separate ZIL device, so I'm leaving the SSD cards in the RAIDzillas. It does mean that a future SSD failure won't have a significant impact on the system's performance. The VeloDrive units that I've been getting as warranty replacements are about twice as fast as the Z-Drive R2 units.

First, I had to get up to speed on the relationship between I2C, SMBus and PMBus. I2C is the physical interface that the 2 buses use. SMBus is the general system management bus for monitoring voltages, fan speeds and so on. Since the remote management feature of this motherboard supports IPMI (a standardized way to access this data) I did not need to worry about collecting and decoding this information - I could just query the remote management interface for it. PMBus was a whole other story - there is apparently no standard location for this and the Supermicro remote management has no idea how to collect the data from a CI Design (actually 3Y Power - see below) power supply. This was going to get interesting...

Next, I needed to confirm that the power supplies in the NSR316 actually supported PMBus and whether or not one of the loose power supply connectors was actually compatible with the header I'd found on the Supermicro motherboard. I called CI Design tech support and asked them, and got a "We don't know - we'll check and get back to you" answer (to be fair, it was just before closing time at CI Design and they'd have to contact their Asian branch to get the data). I thanked them and started researching things on my own. Pulling out one of the hot-swap power supplies, I determined it was actually a 3Y Power YH5821-1ACR with two YM-2821A supplies installed and was able to determine from the datasheets that the connector I'd found was indeed the PMBus connector, with the same pinout as the motherboard was expecting. Easy - plug it in and instant monitoring! Or...

The Supermicro remote management interface didn't show any PMBus information for the power supplies, even with the connector plugged in. I started searching for information in various places - the Supermicro knowledge base, Linux SMBus drivers and so on. Finally, I found an advertisement for rackmount servers from a well-known provider of FreeBSD-based hardware, and that ad listed PMBus as one of the supported items. I wrote to them and explained that I was building my own systems, but was very interested in their PMBus support and would be willing to pay to obtain the software they used to interface the PMBus to FreeBSD. I got a quick response of "We'll check and get back to you", followed a day or two later by a sheepish "We found that the hardware supports PMBus but we don't monitor it - sorry". Back to square one...

Armed with enough information to be dangerous, I started using ipmitool to poke around the SMBus to try to find the power supplies. During this experimentation, I managed to hang the system, turn off the fans, and generally cause havoc on the SMBus. Fortunately, a hard power cycle would clear up any of these. I finally located the power supplies and was able to collect some product data from them, as shown here:

    ipmitool i2c bus=3 0xa0 0x40 0x0
     01 00 00 00 01 08 00 f6 01 07 00 d3 33 59 20 50
     4f 57 45 52 c8 59 4d 2d 32 38 32 31 41 d1 4f 4d
     45 47 41 38 32 31 41 4d 50 32 30 30 52 31 34 07
     d9 30 30 d2 30 30 30 32 30 30 30 35 30 38 30 39
    starting at 0x0c - "3Y POWER?YM-2821AMP200R14??00?000200050809"

    ipmitool i2c bus=3 0xa0 0x40 0x40
     30 30 30 33 31 38 c0 c0 c1 9d 00 02 1e 94 4c d0
     03 03 e8 3c 0a 28 23 90 33 50 46 20 67 2f 3f 12
     1e e8 49 34 03 00 01 01 02 13 04 e6 01 b0 04 8c
     04 d4 04 96 00 d0 07 a4 ce 01 02 13 ab 3f 80 f4
    "000318"

I'd located the fixed product data, but not the live monitoring data, when I noticed that Supermicro had released a Java-based utility named SMCIPMITool, which (among other things) claimed to support PMBus. Experimenting with it, I found that (like ipmitool) I had to specify some arcane values in order for it to find my power supplies. It turns out that the commands are "pminfo 7 b0" for the first power supply and "pminfo 7 b2" for the second power supply. With those commands, I am able to retrieve a reasonable amount of information from the power supplies, for example:

| Item | Value |
| ---- | ----- |
| Status | [STATUS OK](00h) |
| AC Input Voltage | 0.0 V |
| AC Input Current | 0.0 A |
| DC 12V Output Voltage | 12.13 V |
| DC 12V Output Current | 15.5 A |
| Temperature 1 | 47C/117F |
| Temperature 2 | 23C/73F |
| Fan 1 | 4200 RPM |
| Fan 2 | 4200 RPM |
| DC 12V Output Power | 184 W |
| AC Input Power | 196 W |
| PMBus Revision | 0x0011 |
| PWS Serial Number | |
| PWS Module Number | |
| PWS Revision | |

Note that some of the data reports as "0.0" and some simply isn't present. This is probably due to the SMCIPMITool utility expecting a Supermicro power supply.
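The fixed product data returned by the first ipmitool command earlier can be picked apart by eye, but a few lines of Python make the text fields easier to spot. This is only a minimal sketch and not the tooling used for this article: the helper name `printable` is made up for illustration, and it simply maps each byte to ASCII (showing `?` for anything non-printable) rather than parsing the length/type bytes that separate the fields.

```python
# Bytes copied from the first "ipmitool i2c" dump (offsets 0x00-0x3f).
FRU_HEX = """
01 00 00 00 01 08 00 f6 01 07 00 d3 33 59 20 50
4f 57 45 52 c8 59 4d 2d 32 38 32 31 41 d1 4f 4d
45 47 41 38 32 31 41 4d 50 32 30 30 52 31 34 07
d9 30 30 d2 30 30 30 32 30 30 30 35 30 38 30 39
"""


def printable(data: bytes, start: int = 0) -> str:
    """Return data[start:] as ASCII, with '?' for non-printable bytes."""
    return "".join(chr(b) if 0x20 <= b < 0x7F else "?" for b in data[start:])


# Remove all whitespace first so the hex string parses on any Python 3 release.
fru = bytes.fromhex("".join(FRU_HEX.split()))

# The readable text begins at offset 0x0c, as noted under the dump.
text = printable(fru, start=0x0C)
print(text)
```

The `?` characters in the result line up with the single bytes that sit between the manufacturer, model and serial fields in the dump.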

I was surprised to see that the newer motherboard was slightly different, with some connectors unpopulated (most obvious was the 2nd serial port, COM2). I went ahead and installed it anyway, and was surprised to find that it also reported a different set of sensors than the first board. In particular, while the first board reported "Not available" for the temperature of each memory module (expected, as the test memory didn't have thermal sensors), the second board didn't even show that - it was as if the board didn't know that there might be sensors there.

I contacted Supermicro support, who were quite helpful. They told me to re-flash the IPMI (remote management) firmware and select the "reset to defaults" option when flashing. I wasn't particularly happy with needing to re-enter the whole IPMI configuration, but went along. After re-flashing, the memory temperature sensors were now being reported properly.

This does point out one problem with Supermicro's BIOS and IPMI update procedures, though. The BIOS upgrade forcibly resets the configuration to the default values. If I manually flash the BIOS instead of using the provided batch file and specify that the configuration should be retained, the system will POST under the new BIOS but then complain that the settings are corrupt and that it is loading defaults. As there are something like 12 pages of configuration options, this is quite annoying. The IPMI instructions also say to use the "reset to defaults" option. Fortunately, I haven't had to use that option once I performed the initial IPMI firmware update - subsequent updates have worked properly without needing the reset.

The actual flashing of the various components (including the 3Ware controller) is quite good overall - the IPMI and the 3Ware firmware can be updated via their respective web interfaces. The BIOS has to be flashed using a bootable USB stick with MS-DOS on it, which I plug into one of the USB ports on the front of the RAIDzilla II.

I thought about redesigning the storage pool from scratch, using 4 raidz1 vdevs with 4 drives each. In theory, that would give me the same amount of data space I have now, but 25% higher disk I/O performance. Currently there are three 5-drive raidz1 vdevs and 1 spare, giving 12 drives' worth of usable space (raidz1 is similar to RAID 5, giving up one drive per vdev to parity). The new layout would also have 12 usable drives, so I'd be getting higher performance out of the same hardware and, since autoreplace doesn't do anything, not giving up any reliability. However, benchmark tests didn't show much improvement with the redesigned layout. Apparently ZFS prefers vdevs of size 2^n+l, where l is the RAIDZ level (1 for RAIDZ1, 2 for RAIDZ2, or 3 for RAIDZ3). Thus, my 5-drive vdevs (2^2+1) were optimal. Also, if the servers were in a remote data center where I wouldn't be able to swap a failed drive within a few hours, the current layout would be better, as I could SSH in to the server and manually replace the failed drive in the pool with the hot spare without needing to physically visit the site.
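The drive arithmetic behind the two layouts can be checked with a short Python sketch. The `Layout` class and its field names are hypothetical, introduced only for this illustration; the "power of two plus parity" test encodes the rule of thumb quoted above, not anything ZFS itself enforces.

```python
from dataclasses import dataclass


@dataclass
class Layout:
    name: str
    vdevs: int            # number of raidz vdevs in the pool
    drives_per_vdev: int  # drives in each vdev, parity included
    parity: int           # 1 = raidz1, 2 = raidz2, 3 = raidz3
    spares: int = 0       # hot spares outside any vdev

    @property
    def total_drives(self) -> int:
        return self.vdevs * self.drives_per_vdev + self.spares

    @property
    def usable_drives(self) -> int:
        return self.vdevs * (self.drives_per_vdev - self.parity)

    @property
    def fits_rule_of_thumb(self) -> bool:
        """True when the data drives per vdev (width minus parity) are a power of two."""
        data = self.drives_per_vdev - self.parity
        return data > 0 and (data & (data - 1)) == 0


current = Layout("3 x 5-drive raidz1 + spare", vdevs=3, drives_per_vdev=5, parity=1, spares=1)
proposed = Layout("4 x 4-drive raidz1", vdevs=4, drives_per_vdev=4, parity=1)

for layout in (current, proposed):
    print(layout.name, layout.total_drives, layout.usable_drives, layout.fits_rule_of_thumb)
```

Both layouts use all 16 bays and yield 12 drives of usable space; only the existing 5-drive vdevs satisfy the 2^n+l guideline.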

I removed the power supply sleeve and wiring harness (which is a major project as it involves removing the rear sub-frame from the chassis and nearly two dozen screws) and carefully drilled a row of holes across the top of the power supply sleeve where the upper power supply has its air inlet vents. I also added holes on the right side of the sleeve to provide more airflow to the lower power supply.

While I had the power supply out, I took the opportunity to clean up the wiring harness by removing unused connectors and their associated cables from the harness. I also relocated the motherboard power and PMBus cable loops to provide better airflow, as you can see in the picture below.

The power supplies still report excessively high temperatures to my monitoring software, but they do feel much cooler to the touch than RAIDzilla II's with unmodified power supplies.

Some people may ask why I didn't just switch to a different power supply with better ventilation. Unfortunately, the wiring harness from the power supply to the rest of the components has been heavily modified by CI Design, so alternatives such as the Zippy G1W2-5860V3V won't be a drop-in replacement.

I once joked to a friend of mine that while the Backblaze Pod philosophy was "how little can you spend", the RAIDzilla II philosophy was "how much can you spend". While that isn't completely true (the current cost of a drive-less 'zilla II is only a little above $3000 as of February, 2013), the Pod definitely packs more storage into a given space than the RAIDzilla II, and thus represents an ideal answer to Backblaze's needs.

I wrote up a more detailed comparison which you can read here.

Second, I wanted to be able to re-use the entire RAIDzilla (except for the drives) for many years to come. There's no guarantee that there will be upgrade CPUs in the E5500 / E5600 series available in the future, nor this type of memory. So the idea was to build a system in the maximum configuration and be able to use it for at least 10 years, with a mid-life replacement of the 2TB drives with 4TB or 6TB models. This is approximately twice the usable life I obtained from the original RAIDzillas, though all 3 of those are still operating, providing excellent service [and low storage capacity!] for a friend of mine.

I do expect the price of 10Gb Ethernet cards to come down over the next few years (this has already been happening since I built the first RAIDzilla II), so I may re-visit this in a few years.

As of April, 2013 it is possible to purchase the Intel X540-T1 10GbE adapter (1 copper RJ-45 port) for around $350, and the Netgear XS708E 8-port 10GbE switch for a little over $800. I am in the process of converting my RAIDzilla II's to 10GbE and will update this article with performance results at some point. Simple tests with iperf are encouraging, showing over 4Gbit/sec with a single connection and 9.88Gbit/sec using three connections.

This is probably a case of "great minds think alike", but it would be amusing if instead it was "imitation is the sincerest form of flattery", particularly since the Unitrends box sells for $45,000 (according to the article in The Register).

Originally Posted by www.blu-ray.com

We are extremely sad to let you know that we've experienced 7 weeks of database loss. 7 weeks ago we moved to a new much improved server, but unfortunately earlier today the hard drives of the database crashed (was using RAID). On the old server we did daily backups, but since we changed server and setup, the old server backup solution didn't work anymore. We have been discussing the new backup system on a daily basis, but hadn't yet implemented it, so the timing couldn't have been worse.

What is missing the last 7 weeks

DVD database additions/updates

All products except Blu-ray movies

Price trackings

Collection updates

HT Gallery updates

Movie ratings

Movie reviews

All forum activity

New user registrations

Cast & crew

Any other database data other than mentioned below

Fortunately, they were eventually able to recover almost all of their data (apparently the forum posts were one of the unrecoverable items). But it took them two weeks to recover to that point. If this was your business and not an enthusiast site, do you think you would still be in business after two weeks of not being able to access your vital business information?

Please Note: I am NOT trying to single out or pick on blu-ray.com here - they were just honest and forthcoming enough to put their experiences up on their site for the world to read and learn from. This sort of thing happens all the time to all sorts of organizations and is often not disclosed to the public, instead ending up in a footnote to a Form 10-Q SEC report.

* Note: This isn't actually what the ostrich does - it puts its head and neck on the sand and then sits down, the idea being that it will thus look like a bush and be able to hide.

This particular TL4000 has a usable capacity of 35.2TB (uncompressed). It dedicates one slot to a cleaning cartridge and three other slots to loading / unloading media, so there are 44 usable slots, each holding an 800GB tape. Unlike some other drive types, modern LTO drives have a very good compression policy - if the data doesn't get smaller when compressed, it writes it out as uncompressed. This choice is made on a (tape) block-by-block basis, so you get the best possible tape capacity. Some competing formats will actually use more tape when storing incompressible data when compression is enabled, due to the overhead of the decompression table.
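The capacity figure follows directly from the slot count. A tiny Python sketch of the arithmetic is shown here; the constant names are invented for the example, and the total slot count is inferred from the numbers in the paragraph rather than taken from a data sheet.

```python
USABLE_SLOTS = 44      # slots left for data tapes, per the text
CLEANING_SLOTS = 1     # slot holding the cleaning cartridge
IO_SLOTS = 3           # slots reserved for loading / unloading media
TAPE_NATIVE_GB = 800   # native (uncompressed) capacity of each tape

# Total is inferred by adding the reserved slots back in.
total_slots = USABLE_SLOTS + CLEANING_SLOTS + IO_SLOTS
native_capacity_gb = USABLE_SLOTS * TAPE_NATIVE_GB

print(f"{total_slots} slots, {native_capacity_gb / 1000:.1f} TB native")
```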

I also ordered a bunch of Imation DataGuard tape storage / transport cases which hold 20 LTO tapes per case, used to transport and store tapes for offsite backup, as well as the barcode labels needed for the library to identify which tape is loaded.

I installed a RAIDzilla II at that offsite location and synchronize with it nightly over dedicated Gigabit Ethernet fiber, so the synchronization happens just as rapidly as a local synchronization on the same LAN would. For historical reasons I use rdiff-backup to synchronize the systems. The way I have it configured, I can instantly access a copy of the data as of the last nightly synchronization run, and also have access to all data that changed (added, deleted, modified) over the past 30 days. It meets my needs, so I never really investigated alternatives. If I were starting from scratch, I'd investigate the FreeBSD HAST (Highly Available STorage) facility.

    dump -0uaL -C 32 -b 32 -f /dev/nsa0 /data

But instead of getting data moving happily to tape, I was greeted with the error message:

    dump: /data: unknown file system

How unpleasant. On thinking it over, I realized that dump only works on UFS / UFS2 filesystems. Thus started the search for a dump replacement that supported ZFS.

My first thought was to ask other FreeBSD users what they were using. I started a discussion thread titled "Backup solution for ginormous ZFS pool?" and waited for responses. What I got were mostly comments that ZFS didn't need to be backed up, or that a copy of the data on another ZFS server (which I was already doing with my offsite system) would be sufficient.

I was being stubborn, since I already had the tape library, drives and storage cases. One of the possible solutions mentioned was the AMANDA backup software, which has a pair of desirable features: it is free, and it runs on FreeBSD. Unfortunately, it has a very complicated configuration - it is mostly targeted at an environment where multiple systems do backups to a central storage host and then trigger (or wait for) an AMANDA backup job to run. Since I was actually trying to use less functionality, I figured it would be easier. It was still quite complicated, and rather than continue fighting with it, I decided to ask a number of the companies providing commercial AMANDA support for a quote for some consulting help in setting it up. The responses I got fell into 3 categories:

    gtar cvMbf 8192 /dev/nsa0 /data

I might investigate alternatives in the future, but for now gtar is working fine. The only features it is missing that I care about are automatic tape loading of the next tape and being able to restart from the beginning of a tape in case of an I/O error.
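The two commands express their tape block size in different units, which is easy to miss: dump's `-b` argument is in kilobytes per output block, while GNU tar's `b` blocking factor counts 512-byte blocks per record. A short Python sketch of the conversion follows; the variable names are made up for the example.

```python
DUMP_B = 32    # from "dump ... -b 32": kilobytes per output block
GTAR_B = 8192  # from "gtar cvMbf 8192": blocking factor in 512-byte units

dump_record_bytes = DUMP_B * 1024
gtar_record_bytes = GTAR_B * 512

print(f"dump record: {dump_record_bytes // 1024} KiB")
print(f"gtar record: {gtar_record_bytes // (1024 * 1024)} MiB")
print(f"ratio: {gtar_record_bytes // dump_record_bytes}x")
```

So the gtar command writes 4 MiB records, far larger than the 32 KiB blocks requested in the earlier dump attempt.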

Once the backup is complete, I remove its tapes from the library and package them up in a DataGuard case (the biggest backup so far has been 17 tapes, which fit nicely in a 20-tape case), place a tamper-evident seal on the case and schedule a pickup to take the case to a secure offsite storage facility in another state.

| Part Number | Manufacturer | Qty. | Apr 2010 Price (each) | Apr 2010 Price (total) | Apr 2010 Note(s) | Jul 2011 Price (each) | Jul 2011 Price (total) | Jul 2011 Note(s) | Feb 2013 Price (each) | Feb 2013 Price (total) | Feb 2013 Note(s) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| NSR 316 | CI Design | 1 | $920 | $920 | | $920 | $920 | [1] | $920 | $920 | [1] |
| X8DTH-iF | Supermicro | 1 | $464 | $464 | | $450 | $450 | | $245 | $245 | [2] |
| E5520 | Intel | 2 | $380 | $760 | | $400 | $800 | | $75 | $150 | [3] |
| STS100C | Intel | 2 | $35 | $70 | | $35 | $70 | | $32 | $64 | [2] |
| KVR1333D3D4R9S/8GHA | Kingston | 6 | $501 | $3006 | | $170 | $1020 | | $68 | $408 | [2] |
| OCZSSDPX-ZD2P84256G | OCZ Technology | 1 | $0 | $0 | [4] | $1200 | $1200 | | $1200 | $1200 | [1] |
| 9650SE-16ML | 3Ware | 1 | $900 | $900 | | $800 | $800 | | $780 | $780 | |
| BBU-MODULE-04 | 3Ware | 1 | $60 | $60 | | $100 | $100 | | $124 | $124 | [2] |
| CBL-SFF8087-05M | 3Ware | 4 | $15 | $60 | | $15 | $60 | | $10 | $40 | [2] |
| DL-8ATS | LITEON | 1 | $40 | $40 | [5] | $50 | $50 | | $50 | $50 | [1] |
| SAS 5/E | Dell | 1 | $85 | $85 | [3] | $75 | $75 | [2] | $50 | $50 | [2] |
| WD3200BEKT | Western Digital | 2 | $73 | $146 | | $55 | $110 | | $60 | $120 | |
| WD2003FYYS | Western Digital | 16 | $373 | $5968 | | $220 | $3520 | | $195 | $3120 | |
| Miscellaneous | Cables / labels / etc. | 1 | $50 | $50 | | $50 | $50 | | $50 | $50 | |
| Total Cost | | | | $12529 | | | $9225 | | | $7321 | |
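The totals in the price table can be re-derived from the quantities and unit prices. The Python sketch below does exactly that; the `PARTS` list is transcribed from the table, and the function name `total_for` is hypothetical.

```python
# (part number, quantity, unit price Apr 2010, Jul 2011, Feb 2013) in US dollars
PARTS = [
    ("NSR 316", 1, 920, 920, 920),
    ("X8DTH-iF", 1, 464, 450, 245),
    ("E5520", 2, 380, 400, 75),
    ("STS100C", 2, 35, 35, 32),
    ("KVR1333D3D4R9S/8GHA", 6, 501, 170, 68),
    ("OCZSSDPX-ZD2P84256G", 1, 0, 1200, 1200),
    ("9650SE-16ML", 1, 900, 800, 780),
    ("BBU-MODULE-04", 1, 60, 100, 124),
    ("CBL-SFF8087-05M", 4, 15, 15, 10),
    ("DL-8ATS", 1, 40, 50, 50),
    ("SAS 5/E", 1, 85, 75, 50),
    ("WD3200BEKT", 2, 73, 55, 60),
    ("WD2003FYYS", 16, 373, 220, 195),
    ("Miscellaneous", 1, 50, 50, 50),
]

DATES = ("Apr 2010", "Jul 2011", "Feb 2013")


def total_for(date_index: int) -> int:
    """Sum quantity * unit price for one of the three pricing dates."""
    return sum(row[1] * row[2 + date_index] for row in PARTS)


totals = {date: total_for(i) for i, date in enumerate(DATES)}
for date, amount in totals.items():
    print(f"{date}: ${amount}")
```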

Each of the images is clickable to display a higher-resolution version.

A pair of RAIDzilla II's, rack-mounted. The gray "ears" on either side are reducers, as the RAIDzilla II is a 19" device while the cabinet is a 23" (telco standard) rack. The green LEDs on each hot-swap drive carrier indicate "installed and no errors", while the blue LEDs indicate drive activity (the top right drive doesn't have its blue LED lit as it is the "hot" spare). The LEDs to the right of the blue ones (which appear blue in this photograph) are red, and only illuminate when there is a drive error, or when the drives are reset as part of the controller's BIOS initialization.

This is an annotated view of the internals of the RAIDzilla II. The major components are numbered as follows:

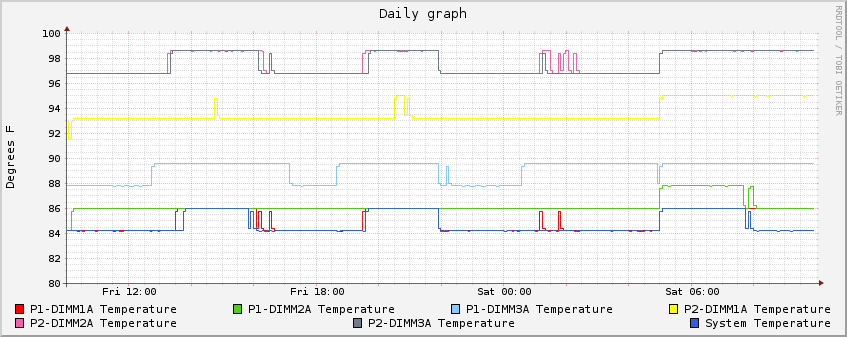

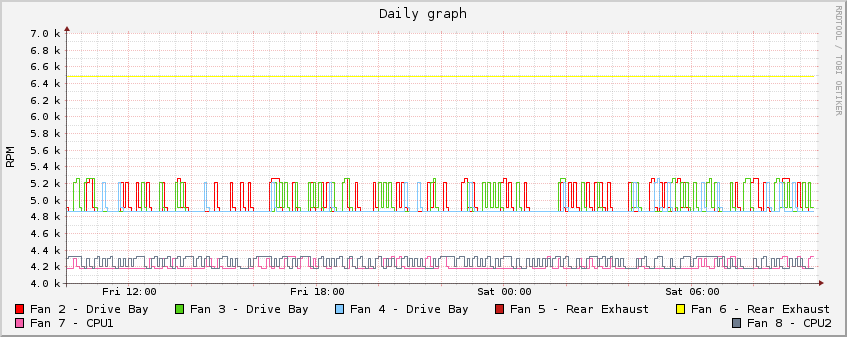

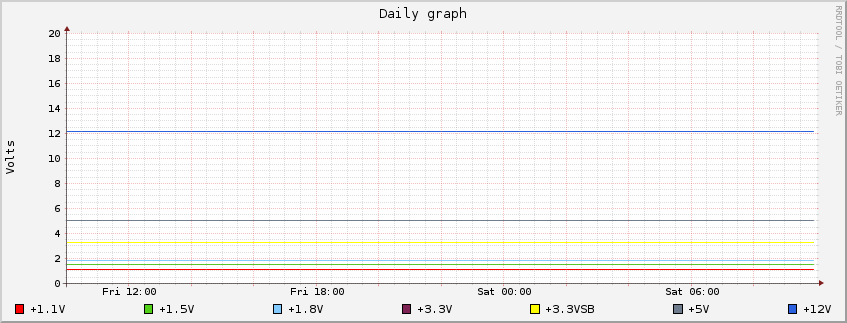

In a room with an ambient temperature of 72°F, the disk drives have temperatures ranging from 76°F to 78°F. The RAID controller battery backup module is 77°F. The CPUs and memory modules run somewhat warmer; the memory temperatures range from 82°F to 95°F - still well within the normal operating range.

This is a close-up view of the modified power supply cooling and cabling layout. Vent holes have been drilled on the top of the power supply sleeve (and on the side, not visible in this picture) to allow the upper power supply's fans to actually pull in cool air. The metal stripes you can see on the right of the holes are the air inlet vents on the upper power supply module. Though it's hard to make out in this picture, there's a gap of about ¼" between the circuit board on the left of the holes and the top of the power supply sleeve.

Also, the wiring has been neatened substantially - a large bundle of unused connectors was disconnected from the harness and removed, and the slack loops for the motherboard power cables and PMBus cables have been relocated to further improve airflow.

As I mentioned earlier, I wanted to be able to monitor all of the RAIDzilla sensors from a central management station. The following section shows a snapshot of the data as displayed on my management station. The data shown here is static and dates from the time this web page was first created. Additionally, the "Links" section has had the hotlinks (to the live system) removed and only the daily graphs are shown. The actual management station has additional graphs for weekly, monthly and yearly data.

| Sensor name | Value | Type | Stat | Low Irr. | Low Crit | Low Warn | High Warn | High Crit | High Irr. |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| System Temp | 28.000 | degrees C | ok | -9.000 | -7.000 | -5.000 | 75.000 | 77.000 | 79.000 |
| CPU1 Vcore | 1.064 | Volts | ok | 0.808 | 0.816 | 0.824 | 1.352 | 1.360 | 1.368 |
| CPU2 Vcore | 1.048 | Volts | ok | 0.808 | 0.816 | 0.824 | 1.352 | 1.360 | 1.368 |
| CPU1 VTT | 1.104 | Volts | ok | 0.808 | 0.816 | 0.824 | 1.512 | 1.520 | 1.528 |
| CPU2 VTT | 1.128 | Volts | ok | 0.808 | 0.816 | 0.824 | 1.512 | 1.520 | 1.528 |
| CPU1 DIMM | 1.512 | Volts | ok | 1.288 | 1.296 | 1.304 | 1.656 | 1.664 | 1.672 |
| CPU2 DIMM | 1.512 | Volts | ok | 1.288 | 1.296 | 1.304 | 1.656 | 1.664 | 1.672 |
| +1.5V | 1.512 | Volts | ok | 1.320 | 1.328 | 1.336 | 1.656 | 1.664 | 1.672 |
| +1.8V | 1.824 | Volts | ok | 1.592 | 1.600 | 1.608 | 1.976 | 1.984 | 1.992 |
| +5V | 5.056 | Volts | ok | 4.416 | 4.448 | 4.480 | 5.536 | 5.568 | 5.600 |
| +12V | 12.137 | Volts | ok | 10.600 | 10.653 | 10.706 | 13.250 | 13.303 | 13.356 |
| +1.1V | 1.104 | Volts | ok | 0.960 | 0.968 | 0.976 | 1.216 | 1.224 | 1.232 |
| +3.3V | 3.240 | Volts | ok | 2.880 | 2.904 | 2.928 | 3.648 | 3.672 | 3.696 |
| +3.3VSB | 3.288 | Volts | ok | 2.880 | 2.904 | 2.928 | 3.648 | 3.672 | 3.696 |
| VBAT | 3.264 | Volts | ok | 2.880 | 2.904 | 2.928 | 3.648 | 3.672 | 3.696 |
| Fan1 | na | RPM | na | 405.000 | 540.000 | 675.000 | 34155.000 | 34290.000 | 34425.000 |
| Fan2 | 4860.000 | RPM | ok | 405.000 | 540.000 | 675.000 | 34155.000 | 34290.000 | 34425.000 |
| Fan3 | 4860.000 | RPM | ok | 405.000 | 540.000 | 675.000 | 34155.000 | 34290.000 | 34425.000 |
| Fan4 | 4860.000 | RPM | ok | 405.000 | 540.000 | 675.000 | 34155.000 | 34290.000 | 34425.000 |
| Fan5 | 6480.000 | RPM | ok | 405.000 | 540.000 | 675.000 | 34155.000 | 34290.000 | 34425.000 |
| Fan6 | 6480.000 | RPM | ok | 405.000 | 540.000 | 675.000 | 34155.000 | 34290.000 | 34425.000 |
| Fan7 | 4185.000 | RPM | ok | 405.000 | 540.000 | 675.000 | 34155.000 | 34290.000 | 34425.000 |
| Fan8 | 4320.000 | RPM | ok | 405.000 | 540.000 | 675.000 | 34155.000 | 34290.000 | 34425.000 |
| P1-DIMM1A Temp | 28.000 | degrees C | ok | -9.000 | -7.000 | -5.000 | 80.000 | 85.000 | 90.000 |
| P1-DIMM2A Temp | 29.000 | degrees C | ok | -9.000 | -7.000 | -5.000 | 80.000 | 85.000 | 90.000 |
| P1-DIMM3A Temp | 30.000 | degrees C | ok | -9.000 | -7.000 | -5.000 | 80.000 | 85.000 | 90.000 |
| P2-DIMM1A Temp | 33.000 | degrees C | ok | -9.000 | -7.000 | -5.000 | 80.000 | 85.000 | 90.000 |
| P2-DIMM2A Temp | 35.000 | degrees C | ok | -9.000 | -7.000 | -5.000 | 80.000 | 85.000 | 90.000 |
| P2-DIMM3A Temp | 35.000 | degrees C | ok | -9.000 | -7.000 | -5.000 | 80.000 | 85.000 | 90.000 |
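The sensor listing above is pipe-delimited, so turning a row into something a monitoring script can work with takes only a few lines. The Python sketch below is an illustration under stated assumptions, not the code running on the management station: the function names and dictionary keys are invented, and the three sample rows are copied from the listing. It also converts the Celsius readings to Fahrenheit, which is how the memory temperatures are quoted in the text.

```python
THRESHOLD_KEYS = ("low_irr", "low_crit", "low_warn", "high_warn", "high_crit", "high_irr")


def to_number(field: str):
    """Convert a sensor field to float, or None when it reads 'na'."""
    return None if field == "na" else float(field)


def parse_sensor_row(line: str) -> dict:
    """Split one pipe-delimited sensor row into named fields."""
    fields = [f.strip() for f in line.split("|")]
    return {
        "name": fields[0],
        "value": to_number(fields[1]),
        "unit": fields[2],
        "status": fields[3],
        "thresholds": dict(zip(THRESHOLD_KEYS, map(to_number, fields[4:10]))),
    }


def c_to_f(celsius: float) -> float:
    return celsius * 9 / 5 + 32


ROWS = [
    "P1-DIMM1A Temp | 28.000 | degrees C | ok | -9.000 | -7.000 | -5.000 | 80.000 | 85.000 | 90.000",
    "P2-DIMM3A Temp | 35.000 | degrees C | ok | -9.000 | -7.000 | -5.000 | 80.000 | 85.000 | 90.000",
    "Fan1 | na | RPM | na | 405.000 | 540.000 | 675.000 | 34155.000 | 34290.000 | 34425.000",
]

sensors = [parse_sensor_row(row) for row in ROWS]
for s in sensors:
    if s["unit"] == "degrees C" and s["value"] is not None:
        print(f'{s["name"]}: {s["value"]:.0f} C = {c_to_f(s["value"]):.1f} F')
    else:
        print(f'{s["name"]}: {s["value"]} {s["unit"]} ({s["status"]})')
```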

PS A Status

| Item | Value |
| ---- | ----- |
| DC 12V Output Voltage | 11.85 V |
| DC 12V Output Current | 16.5 A |
| Temperature 1 | 46C/115F |
| Temperature 2 | 29C/84F |
| Fan 1 | 5000 RPM |
| Fan 2 | 4896 RPM |
| DC 12V Output Power | 195 W |
| AC Input Power | 235 W |
| PMBus Revision | 0x0011 |

PS B Status

| Item | Value |
| ---- | ----- |
| DC 12V Output Voltage | 12.1 V |
| DC 12V Output Current | 13.5 A |
| Temperature 1 | 66C/151F |
| Temperature 2 | 29C/84F |
| Fan 1 | 4600 RPM |
| Fan 2 | 4600 RPM |
| DC 12V Output Power | 163 W |
| AC Input Power | 201 W |
| PMBus Revision | 0x0011 |
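Since each supply reports both its AC input power and its 12V DC output power, a rough efficiency figure can be derived from the two. The Python sketch below shows the calculation with the values from the PS A and PS B status listings; treat the result as indicative only, since it assumes the reported figures are accurate and ignores any output other than the 12V rail. The names `READINGS` and `efficiency_pct` are made up for the example.

```python
# Values copied from the PS A / PS B status listings (watts).
READINGS = {
    "PS A": {"dc_out_w": 195, "ac_in_w": 235},
    "PS B": {"dc_out_w": 163, "ac_in_w": 201},
}


def efficiency_pct(dc_out_w: float, ac_in_w: float) -> float:
    """12V output power as a percentage of AC input power."""
    return 100.0 * dc_out_w / ac_in_w


results = {name: efficiency_pct(**r) for name, r in READINGS.items()}
for name, pct in results.items():
    print(f"{name}: {pct:.1f}% (12V output / AC input)")
```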

| No. | Date | Time | Message |
| --- | --- | --- | --- |

SEL has no entries

For the computer geeks out there, this is a "dmesg" output of the system booting up, listing the installed hardware:

Copyright (c) 1992-2013 The FreeBSD Project.

Copyright (c) 1979, 1980, 1983, 1986, 1988, 1989, 1991, 1992, 1993, 1994

The Regents of the University of California. All rights reserved.

FreeBSD is a registered trademark of The FreeBSD Foundation.

FreeBSD 8.4-PRERELEASE #0 r250981M: Sat May 25 09:48:16 EDT 2013

terry@rz1.glaver.org:/usr/obj/usr/src/sys/RAIDZILLA2 amd64

gcc version 4.2.1 20070831 patched [FreeBSD]

Timecounter "i8254" frequency 1193182 Hz quality 0

CPU: Intel(R) Xeon(R) CPU E5520 @ 2.27GHz (2275.82-MHz K8-class CPU)

Origin = "GenuineIntel" Id = 0x106a5 Family = 6 Model = 1a Stepping = 5

Features=0xbfebfbff<FPU,VME,DE,PSE,TSC,MSR,PAE,MCE,CX8,APIC,SEP,MTRR,PGE,MCA,CMOV,PAT,PSE36,CLFLUSH,DTS,ACPI,MMX,FXSR,SSE,SSE2,SS,HTT,TM,PBE>

Features2=0x9ce3bd<SSE3,DTES64,MON,DS_CPL,VMX,EST,TM2,SSSE3,CX16,xTPR,PDCM,DCA,SSE4.1,SSE4.2,POPCNT>

AMD Features=0x28100800<SYSCALL,NX,RDTSCP,LM>

AMD Features2=0x1<LAHF>

TSC: P-state invariant

real memory = 51543801856 (49156 MB)

avail memory = 49690439680 (47388 MB)

ACPI APIC Table: <SUPERM APIC1635>

FreeBSD/SMP: Multiprocessor System Detected: 16 CPUs

FreeBSD/SMP: 2 package(s) x 4 core(s) x 2 SMT threads

cpu0 (BSP): APIC ID: 0

cpu1 (AP): APIC ID: 1

cpu2 (AP): APIC ID: 2

cpu3 (AP): APIC ID: 3

cpu4 (AP): APIC ID: 4

cpu5 (AP): APIC ID: 5

cpu6 (AP): APIC ID: 6

cpu7 (AP): APIC ID: 7

cpu8 (AP): APIC ID: 16

cpu9 (AP): APIC ID: 17

cpu10 (AP): APIC ID: 18

cpu11 (AP): APIC ID: 19

cpu12 (AP): APIC ID: 20

cpu13 (AP): APIC ID: 21

cpu14 (AP): APIC ID: 22

cpu15 (AP): APIC ID: 23

ioapic0 <Version 2.0> irqs 0-23 on motherboard

ioapic1 <Version 2.0> irqs 24-47 on motherboard

ioapic2 <Version 2.0> irqs 48-71 on motherboard

ichwd module loaded

kbd1 at kbdmux0

acpi0: <SMCI > on motherboard

acpi0: Overriding SCI Interrupt from IRQ 9 to IRQ 20

acpi0: [ITHREAD]

acpi0: Power Button (fixed)

acpi0: reservation of 400, 100 (3) failed

Timecounter "ACPI-safe" frequency 3579545 Hz quality 850

acpi_timer0: <24-bit timer at 3.579545MHz> port 0x808-0x80b on acpi0

cpu0: <ACPI CPU> on acpi0

cpu1: <ACPI CPU> on acpi0

cpu2: <ACPI CPU> on acpi0

cpu3: <ACPI CPU> on acpi0

cpu4: <ACPI CPU> on acpi0

cpu5: <ACPI CPU> on acpi0

cpu6: <ACPI CPU> on acpi0

cpu7: <ACPI CPU> on acpi0

cpu8: <ACPI CPU> on acpi0

cpu9: <ACPI CPU> on acpi0

cpu10: <ACPI CPU> on acpi0

cpu11: <ACPI CPU> on acpi0

cpu12: <ACPI CPU> on acpi0

cpu13: <ACPI CPU> on acpi0

cpu14: <ACPI CPU> on acpi0

cpu15: <ACPI CPU> on acpi0

pcib0: <ACPI Host-PCI bridge> port 0xcf8-0xcff on acpi0

pci0: <ACPI PCI bus> on pcib0

pcib1: <ACPI PCI-PCI bridge> at device 1.0 on pci0

pci1: <ACPI PCI bus> on pcib1

igb0: <Intel(R) PRO/1000 Network Connection version - 2.3.9 - 8> port 0xcc00-0xcc1f mem 0xfaee0000-0xfaefffff,0xfaec0000-0xfaedffff,0xfae9c000-0xfae9ffff irq 28 at device 0.0 on pci1

igb0: Using MSIX interrupts with 9 vectors

igb0: Ethernet address: 00:25:90:01:25:70

igb0: [ITHREAD]

igb0: Bound queue 0 to cpu 0

igb0: [ITHREAD]

igb0: Bound queue 1 to cpu 1

igb0: [ITHREAD]

igb0: Bound queue 2 to cpu 2

igb0: [ITHREAD]

igb0: Bound queue 3 to cpu 3

igb0: [ITHREAD]

igb0: Bound queue 4 to cpu 4

igb0: [ITHREAD]

igb0: Bound queue 5 to cpu 5

igb0: [ITHREAD]

igb0: Bound queue 6 to cpu 6

igb0: [ITHREAD]

igb0: Bound queue 7 to cpu 7

igb0: [ITHREAD]

igb1: <Intel(R) PRO/1000 Network Connection version - 2.3.9 - 8> port 0xc800-0xc81f mem 0xfae20000-0xfae3ffff,0xfae00000-0xfae1ffff,0xfaddc000-0xfaddffff irq 40 at device 0.1 on pci1

igb1: Using MSIX interrupts with 9 vectors

igb1: Ethernet address: 00:25:90:01:25:71

igb1: [ITHREAD]

igb1: Bound queue 0 to cpu 8

igb1: [ITHREAD]

igb1: Bound queue 1 to cpu 9

igb1: [ITHREAD]

igb1: Bound queue 2 to cpu 10

igb1: [ITHREAD]

igb1: Bound queue 3 to cpu 11

igb1: [ITHREAD]

igb1: Bound queue 4 to cpu 12

igb1: [ITHREAD]

igb1: Bound queue 5 to cpu 13

igb1: [ITHREAD]

igb1: Bound queue 6 to cpu 14

igb1: [ITHREAD]

igb1: Bound queue 7 to cpu 15

igb1: [ITHREAD]

pcib2: <ACPI PCI-PCI bridge> at device 3.0 on pci0

pci3: <ACPI PCI bus> on pcib2

ix0: <Intel(R) PRO/10GbE PCI-Express Network Driver, Version - 2.5.8> mem 0xf8e00000-0xf8ffffff,0xf8dfc000-0xf8dfffff irq 24 at device 0.0 on pci3

ix0: Using MSIX interrupts with 9 vectors

ix0: [ITHREAD]

ix0: [ITHREAD]

ix0: [ITHREAD]

ix0: [ITHREAD]

ix0: [ITHREAD]

ix0: [ITHREAD]

ix0: [ITHREAD]

ix0: [ITHREAD]

ix0: [ITHREAD]

ix0: Ethernet address: a0:36:9f:1d:65:c2

ix0: PCI Express Bus: Speed 5.0Gb/s Width x8

ix0: link state changed to UP

pcib3: <ACPI PCI-PCI bridge> at device 5.0 on pci0

pci5: <ACPI PCI bus> on pcib3

pcib4: <ACPI PCI-PCI bridge> at device 7.0 on pci0

pci6: <ACPI PCI bus> on pcib4

mps0: <LSI SAS2004> port 0xd800-0xd8ff mem 0xfbcf0000-0xfbcfffff irq 30 at device 0.0 on pci6

mps0: Firmware: 16.00.00.00, Driver: 14.00.00.01-fbsd

mps0: IOCCapabilities: 185c<ScsiTaskFull,DiagTrace,SnapBuf,EEDP,TransRetry,IR>

mps0: [ITHREAD]

pcib5: <ACPI PCI-PCI bridge> at device 9.0 on pci0

pci7: <ACPI PCI bus> on pcib5

pci0: <base peripheral, interrupt controller> at device 20.0 (no driver attached)

pci0: <base peripheral, interrupt controller> at device 20.1 (no driver attached)

pci0: <base peripheral, interrupt controller> at device 20.2 (no driver attached)

pci0: <base peripheral, interrupt controller> at device 20.3 (no driver attached)

pci0: <base peripheral> at device 22.0 (no driver attached)

pci0: <base peripheral> at device 22.1 (no driver attached)

pci0: <base peripheral> at device 22.2 (no driver attached)

pci0: <base peripheral> at device 22.3 (no driver attached)

pci0: <base peripheral> at device 22.4 (no driver attached)

pci0: <base peripheral> at device 22.5 (no driver attached)

pci0: <base peripheral> at device 22.6 (no driver attached)

pci0: <base peripheral> at device 22.7 (no driver attached)

uhci0: <Intel 82801JI (ICH10) USB controller USB-D> port 0xaf80-0xaf9f irq 16 at device 26.0 on pci0

uhci0: [ITHREAD]

uhci0: LegSup = 0x2f00

usbus0 on uhci0

uhci1: <Intel 82801JI (ICH10) USB controller USB-E> port 0xaf40-0xaf5f irq 21 at device 26.1 on pci0

uhci1: [ITHREAD]

uhci1: LegSup = 0x2f00

usbus1 on uhci1

uhci2: <Intel 82801JI (ICH10) USB controller USB-F> port 0xaf20-0xaf3f irq 19 at device 26.2 on pci0

uhci2: [ITHREAD]

uhci2: LegSup = 0x2f00

usbus2 on uhci2

ehci0: <Intel 82801JI (ICH10) USB 2.0 controller USB-B> mem 0xfbeda000-0xfbeda3ff irq 18 at device 26.7 on pci0

ehci0: [ITHREAD]

usbus3: EHCI version 1.0

usbus3 on ehci0

uhci3: <Intel 82801JI (ICH10) USB controller USB-A> port 0xaf00-0xaf1f irq 23 at device 29.0 on pci0

uhci3: [ITHREAD]

uhci3: LegSup = 0x2f00

usbus4 on uhci3

uhci4: <Intel 82801JI (ICH10) USB controller USB-B> port 0xaec0-0xaedf irq 19 at device 29.1 on pci0

uhci4: [ITHREAD]

uhci4: LegSup = 0x2f00

usbus5 on uhci4

uhci5: <Intel 82801JI (ICH10) USB controller USB-C> port 0xaea0-0xaebf irq 18 at device 29.2 on pci0

uhci5: [ITHREAD]

uhci5: LegSup = 0x2f00

usbus6 on uhci5

ehci1: <Intel 82801JI (ICH10) USB 2.0 controller USB-A> mem 0xfbed8000-0xfbed83ff irq 23 at device 29.7 on pci0

ehci1: [ITHREAD]

usbus7: EHCI version 1.0

usbus7 on ehci1

pcib6: <ACPI PCI-PCI bridge> at device 30.0 on pci0

pci8: <ACPI PCI bus> on pcib6

vgapci0: <VGA-compatible display> mem 0xf9000000-0xf9ffffff,0xfaffc000-0xfaffffff,0xfb000000-0xfb7fffff irq 16 at device 4.0 on pci8

isab0: <PCI-ISA bridge> at device 31.0 on pci0

isa0: <ISA bus> on isab0

ahci0: <Intel ICH10 AHCI SATA controller> port 0xaff0-0xaff7,0xafac-0xafaf,0xafe0-0xafe7,0xafa8-0xafab,0xae80-0xae9f mem 0xfbed6000-0xfbed67ff irq 19 at device 31.2 on pci0

ahci0: [ITHREAD]

ahci0: AHCI v1.20 with 6 3Gbps ports, Port Multiplier not supported

ahcich0: <AHCI channel> at channel 0 on ahci0

ahcich0: [ITHREAD]

ahcich1: <AHCI channel> at channel 1 on ahci0

ahcich1: [ITHREAD]

ahcich2: <AHCI channel> at channel 2 on ahci0

ahcich2: [ITHREAD]

ahcich3: <AHCI channel> at channel 3 on ahci0

ahcich3: [ITHREAD]

ahcich4: <AHCI channel> at channel 4 on ahci0

ahcich4: [ITHREAD]

ahcich5: <AHCI channel> at channel 5 on ahci0

ahcich5: [ITHREAD]

ichsmb0: <Intel 82801JI (ICH10) SMBus controller> port 0x400-0x41f mem 0xfbed4000-0xfbed40ff irq 18 at device 31.3 on pci0

ichsmb0: [ITHREAD]

smbus0: <System Management Bus> on ichsmb0

smb0: <SMBus generic I/O> on smbus0

pcib7: <ACPI Host-PCI bridge> on acpi0

pci128: <ACPI PCI bus> on pcib7

pcib8: <PCI-PCI bridge> at device 0.0 on pci128

pci129: <PCI bus> on pcib8

pcib9: <ACPI PCI-PCI bridge> at device 1.0 on pci128

pci130: <ACPI PCI bus> on pcib9

pcib10: <ACPI PCI-PCI bridge> at device 3.0 on pci128

pci131: <ACPI PCI bus> on pcib10

pcib11: <PCI-PCI bridge> at device 0.0 on pci131

pci132: <PCI bus> on pcib11

mpt0: <LSILogic SAS/SATA Adapter> port 0xe800-0xe8ff mem 0xf7dec000-0xf7deffff,0xf7df0000-0xf7dfffff irq 48 at device 8.0 on pci132

mpt0: [ITHREAD]

mpt0: MPI Version=1.5.13.0

pcib12: <ACPI PCI-PCI bridge> at device 5.0 on pci128

pci133: <ACPI PCI bus> on pcib12

pcib13: <ACPI PCI-PCI bridge> at device 7.0 on pci128

pci134: <ACPI PCI bus> on pcib13

3ware device driver for 9000 series storage controllers, version: 3.80.06.003

twa0: <3ware 9000 series Storage Controller> port 0xfc00-0xfcff mem 0xf4000000-0xf5ffffff,0xf7fde000-0xf7fdefff irq 54 at device 0.0 on pci134

twa0: [ITHREAD]

twa0: INFO: (0x15: 0x1300): Controller details:: Model 9650SE-16ML, 16 ports, Firmware FE9X 4.10.00.027, BIOS BE9X 4.08.00.004

pcib14: <ACPI PCI-PCI bridge> at device 9.0 on pci128

pci135: <ACPI PCI bus> on pcib14

pci128: <base peripheral, interrupt controller> at device 20.0 (no driver attached)

pci128: <base peripheral, interrupt controller> at device 20.1 (no driver attached)

pci128: <base peripheral, interrupt controller> at device 20.2 (no driver attached)

pci128: <base peripheral, interrupt controller> at device 20.3 (no driver attached)

pci128: <base peripheral> at device 22.0 (no driver attached)

pci128: <base peripheral> at device 22.1 (no driver attached)

pci128: <base peripheral> at device 22.2 (no driver attached)

pci128: <base peripheral> at device 22.3 (no driver attached)

pci128: <base peripheral> at device 22.4 (no driver attached)

pci128: <base peripheral> at device 22.5 (no driver attached)

pci128: <base peripheral> at device 22.6 (no driver attached)

pci128: <base peripheral> at device 22.7 (no driver attached)

acpi_button0: <Power Button> on acpi0

ipmi0: <IPMI System Interface> port 0xca2-0xca3 on acpi0

ipmi0: KCS mode found at io 0xca2 on acpi

atrtc0: <AT realtime clock> port 0x70-0x71 irq 8 on acpi0

atkbdc0: <Keyboard controller (i8042)> port 0x60,0x64 irq 1 on acpi0

atkbd0: <AT Keyboard> irq 1 on atkbdc0

kbd0 at atkbd0

atkbd0: [GIANT-LOCKED]

atkbd0: [ITHREAD]

psm0: <PS/2 Mouse> irq 12 on atkbdc0

psm0: [GIANT-LOCKED]

psm0: [ITHREAD]

psm0: model IntelliMouse Explorer, device ID 4

uart0: <16550 or compatible> port 0x3f8-0x3ff irq 4 flags 0x10 on acpi0

uart0: [FILTER]

uart1: <16550 or compatible> port 0x2f8-0x2ff irq 3 on acpi0

uart1: [FILTER]

acpi_hpet0: <High Precision Event Timer> iomem 0xfed00000-0xfed003ff on acpi0

Timecounter "HPET" frequency 14318180 Hz quality 900

qpi0: <QPI system bus> on motherboard

pcib15: <QPI Host-PCI bridge> pcibus 255 on qpi0

pci255: <PCI bus> on pcib15

pcib16: <QPI Host-PCI bridge> pcibus 254 on qpi0

pci254: <PCI bus> on pcib16

ichwd0 on isa0

ichwd0: ICH WDT present but disabled in BIOS or hardware

device_attach: ichwd0 attach returned 6

ipmi1: <IPMI System Interface> on isa0

device_attach: ipmi1 attach returned 16

ichwd0 at port 0x830-0x837,0x860-0x87f on isa0

ichwd0: ICH WDT present but disabled in BIOS or hardware

device_attach: ichwd0 attach returned 6

ipmi1: <IPMI System Interface> on isa0

device_attach: ipmi1 attach returned 16

orm0: <ISA Option ROMs> at iomem 0xc0000-0xc7fff,0xd1000-0xd1fff,0xd2000-0xd3fff,0xd4000-0xd47ff on isa0

sc0: <System console> at flags 0x100 on isa0

sc0: VGA <16 virtual consoles, flags=0x300>

vga0: <Generic ISA VGA> at port 0x3c0-0x3df iomem 0xa0000-0xbffff on isa0

coretemp0: <CPU On-Die Thermal Sensors> on cpu0

est0: <Enhanced SpeedStep Frequency Control> on cpu0

p4tcc0: <CPU Frequency Thermal Control> on cpu0

coretemp1: <CPU On-Die Thermal Sensors> on cpu1

est1: <Enhanced SpeedStep Frequency Control> on cpu1

p4tcc1: <CPU Frequency Thermal Control> on cpu1

coretemp2: <CPU On-Die Thermal Sensors> on cpu2

est2: <Enhanced SpeedStep Frequency Control> on cpu2

p4tcc2: <CPU Frequency Thermal Control> on cpu2

coretemp3: <CPU On-Die Thermal Sensors> on cpu3

est3: <Enhanced SpeedStep Frequency Control> on cpu3

p4tcc3: <CPU Frequency Thermal Control> on cpu3

coretemp4: <CPU On-Die Thermal Sensors> on cpu4

est4: <Enhanced SpeedStep Frequency Control> on cpu4

p4tcc4: <CPU Frequency Thermal Control> on cpu4

coretemp5: <CPU On-Die Thermal Sensors> on cpu5

est5: <Enhanced SpeedStep Frequency Control> on cpu5

p4tcc5: <CPU Frequency Thermal Control> on cpu5

coretemp6: <CPU On-Die Thermal Sensors> on cpu6

est6: <Enhanced SpeedStep Frequency Control> on cpu6

p4tcc6: <CPU Frequency Thermal Control> on cpu6

coretemp7: <CPU On-Die Thermal Sensors> on cpu7

est7: <Enhanced SpeedStep Frequency Control> on cpu7

p4tcc7: <CPU Frequency Thermal Control> on cpu7

coretemp8: <CPU On-Die Thermal Sensors> on cpu8

est8: <Enhanced SpeedStep Frequency Control> on cpu8

p4tcc8: <CPU Frequency Thermal Control> on cpu8

coretemp9: <CPU On-Die Thermal Sensors> on cpu9

est9: <Enhanced SpeedStep Frequency Control> on cpu9

p4tcc9: <CPU Frequency Thermal Control> on cpu9

coretemp10: <CPU On-Die Thermal Sensors> on cpu10

est10: <Enhanced SpeedStep Frequency Control> on cpu10

p4tcc10: <CPU Frequency Thermal Control> on cpu10

coretemp11: <CPU On-Die Thermal Sensors> on cpu11

est11: <Enhanced SpeedStep Frequency Control> on cpu11

p4tcc11: <CPU Frequency Thermal Control> on cpu11

coretemp12: <CPU On-Die Thermal Sensors> on cpu12

est12: <Enhanced SpeedStep Frequency Control> on cpu12

p4tcc12: <CPU Frequency Thermal Control> on cpu12

coretemp13: <CPU On-Die Thermal Sensors> on cpu13

est13: <Enhanced SpeedStep Frequency Control> on cpu13

p4tcc13: <CPU Frequency Thermal Control> on cpu13

coretemp14: <CPU On-Die Thermal Sensors> on cpu14

est14: <Enhanced SpeedStep Frequency Control> on cpu14

p4tcc14: <CPU Frequency Thermal Control> on cpu14

coretemp15: <CPU On-Die Thermal Sensors> on cpu15

est15: <Enhanced SpeedStep Frequency Control> on cpu15

p4tcc15: <CPU Frequency Thermal Control> on cpu15

ZFS filesystem version: 5

ZFS storage pool version: features support (5000)

Timecounters tick every 1.000 msec

usbus0: 12Mbps Full Speed USB v1.0

usbus1: 12Mbps Full Speed USB v1.0

usbus2: 12Mbps Full Speed USB v1.0

usbus3: 480Mbps High Speed USB v2.0

usbus4: 12Mbps Full Speed USB v1.0

usbus5: 12Mbps Full Speed USB v1.0

usbus6: 12Mbps Full Speed USB v1.0

usbus7: 480Mbps High Speed USB v2.0

ugen0.1: <Intel> at usbus0

uhub0: <Intel UHCI root HUB, class 9/0, rev 1.00/1.00, addr 1> on usbus0

ugen1.1: <Intel> at usbus1

uhub1: <Intel UHCI root HUB, class 9/0, rev 1.00/1.00, addr 1> on usbus1

ugen2.1: <Intel> at usbus2

uhub2: <Intel UHCI root HUB, class 9/0, rev 1.00/1.00, addr 1> on usbus2

ugen3.1: <Intel> at usbus3

uhub3: <Intel EHCI root HUB, class 9/0, rev 2.00/1.00, addr 1> on usbus3

ugen4.1: <Intel> at usbus4

uhub4: <Intel UHCI root HUB, class 9/0, rev 1.00/1.00, addr 1> on usbus4

ugen5.1: <Intel> at usbus5

uhub5: <Intel UHCI root HUB, class 9/0, rev 1.00/1.00, addr 1> on usbus5

ugen6.1: <Intel> at usbus6

uhub6: <Intel UHCI root HUB, class 9/0, rev 1.00/1.00, addr 1> on usbus6

ugen7.1: <Intel> at usbus7

uhub7: <Intel EHCI root HUB, class 9/0, rev 2.00/1.00, addr 1> on usbus7

ipmi0: IPMI device rev. 1, firmware rev. 2.08, version 2.0

ipmi0: Number of channels 2

ipmi0: Attached watchdog

uhub0: 2 ports with 2 removable, self powered

uhub1: 2 ports with 2 removable, self powered

uhub2: 2 ports with 2 removable, self powered

uhub4: 2 ports with 2 removable, self powered

uhub5: 2 ports with 2 removable, self powered

uhub6: 2 ports with 2 removable, self powered

uhub3: 6 ports with 6 removable, self powered

uhub7: 6 ports with 6 removable, self powered

cd0 at ahcich1 bus 0 scbus2 target 0 lun 0

cd0: <Slimtype DVD A DL8ATS XP59> Removable CD-ROM SCSI-0 device

cd0: 150.000MB/s transfers (SATA 1.x, UDMA5, ATAPI 12bytes, PIO 8192bytes)

cd0: Attempt to query device size failed: NOT READY, Medium not present - tray open

sa0 at mpt0 bus 0 scbus7 target 0 lun 0

sa0: <IBM ULT3580-HH4 C7Q1> Removable Sequential Access SCSI-3 device

sa0: 300.000MB/s transfers

sa0: Command Queueing enabled

ch0 at mpt0 bus 0 scbus7 target 0 lun 1

ch0: <IBM 3573-TL B.60> Removable Changer SCSI-5 device

ch0: 300.000MB/s transfers

ch0: Command Queueing enabled

ch0: 44 slots, 1 drive, 1 picker, 3 portals

ada0 at ahcich0 bus 0 scbus1 target 0 lun 0

ada0: <WDC WD3200BEKT-60V5T1 12.01A12> ATA-8 SATA 2.x device

ada0: 300.000MB/s transfers (SATA 2.x, UDMA5, PIO 8192bytes)

ada0: Command Queueing enabled

ada0: 305245MB (625142448 512 byte sectors: 16H 63S/T 16383C)

ada1 at ahcich2 bus 0 scbus3 target 0 lun 0

ada1: <WDC WD3200BEKT-60V5T1 12.01A12> ATA-8 SATA 2.x device

ada1: 300.000MB/s transfers (SATA 2.x, UDMA5, PIO 8192bytes)

ada1: Command Queueing enabled

ada1: 305245MB (625142448 512 byte sectors: 16H 63S/T 16383C)

da0 at mps0 bus 0 scbus0 target 0 lun 0

da0: <LSI Logical Volume 3000> Fixed Direct Access SCSI-6 device

da0: 150.000MB/s transfers

da0: Command Queueing enabled

da0: 301360MB (617185280 512 byte sectors: 255H 63S/T 38418C)

da1 at twa0 bus 0 scbus8 target 0 lun 0

da1: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da1: 100.000MB/s transfers

da1: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da2 at twa0 bus 0 scbus8 target 1 lun 0

da2: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da2: 100.000MB/s transfers

da2: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da3 at twa0 bus 0 scbus8 target 2 lun 0

da3: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da3: 100.000MB/s transfers

da3: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da4 at twa0 bus 0 scbus8 target 3 lun 0

da4: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da4: 100.000MB/s transfers

da4: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da5 at twa0 bus 0 scbus8 target 4 lun 0

da5: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da5: 100.000MB/s transfers

da5: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da6 at twa0 bus 0 scbus8 target 5 lun 0

da6: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da6: 100.000MB/s transfers

da6: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da7 at twa0 bus 0 scbus8 target 6 lun 0

da7: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da7: 100.000MB/s transfers

da7: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da8 at twa0 bus 0 scbus8 target 7 lun 0

da8: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da8: 100.000MB/s transfers

da8: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da9 at twa0 bus 0 scbus8 target 8 lun 0

da9: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da9: 100.000MB/s transfers

da9: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da10 at twa0 bus 0 scbus8 target 9 lun 0

da10: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da10: 100.000MB/s transfers

da10: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da11 at twa0 bus 0 scbus8 target 10 lun 0

da11: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da11: 100.000MB/s transfers

da11: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da12 at twa0 bus 0 scbus8 target 11 lun 0

da12: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da12: 100.000MB/s transfers

da12: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da13 at twa0 bus 0 scbus8 target 12 lun 0

da13: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da13: 100.000MB/s transfers

da13: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da14 at twa0 bus 0 scbus8 target 13 lun 0

da14: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da14: 100.000MB/s transfers

da14: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da15 at twa0 bus 0 scbus8 target 14 lun 0

da15: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da15: 100.000MB/s transfers

da15: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

da16 at twa0 bus 0 scbus8 target 15 lun 0

da16: <AMCC 9650SE-16M DISK 4.10> Fixed Direct Access SCSI-5 device

da16: 100.000MB/s transfers

da16: 1907338MB (3906228224 512 byte sectors: 255H 63S/T 243151C)

SMP: AP CPU #1 Launched!

SMP: AP CPU #2 Launched!

SMP: AP CPU #3 Launched!

SMP: AP CPU #4 Launched!

SMP: AP CPU #5 Launched!

SMP: AP CPU #6 Launched!

SMP: AP CPU #7 Launched!

SMP: AP CPU #8 Launched!

SMP: AP CPU #9 Launched!

SMP: AP CPU #10 Launched!

SMP: AP CPU #11 Launched!

SMP: AP CPU #12 Launched!

SMP: AP CPU #13 Launched!

SMP: AP CPU #14 Launched!

SMP: AP CPU #15 Launched!

GEOM_MIRROR: Device mirror/gm0 launched (2/2).

Trying to mount root from ufs:/dev/mirror/gm0s1a

[0:1] rz1:~> df

Filesystem 1K-blocks Used Avail Capacity Mounted on

/dev/mirror/gm0s1a 8122126 550078 6922278 7% /

devfs 1 1 0 100% /dev

/dev/mirror/gm0s1d 132109852 174074 121366990 0% /var

/dev/mirror/gm0s1e 32494668 6 29895090 0% /var/crash

/dev/mirror/gm0s1f 32494668 4628180 25266916 15% /usr

/dev/mirror/gm0s1g 32494668 80 29895016 0% /tmp

/dev/mirror/gm0s1h 32494668 4 29895092 0% /spare

procfs 4 4 0 100% /proc

data 22955143746 13989950655 8965193090 61% /data

[0:2] rz1:~> zpool status

pool: data

state: ONLINE

scan: scrub repaired 0 in 7h23m with 0 errors on Thu Feb 7 04:44:32 2013

config:

NAME STATE READ WRITE CKSUM

data ONLINE 0 0 0

raidz1-0 ONLINE 0 0 0

label/twd0 ONLINE 0 0 0

label/twd1 ONLINE 0 0 0

label/twd2 ONLINE 0 0 0

label/twd3 ONLINE 0 0 0

label/twd4 ONLINE 0 0 0

raidz1-1 ONLINE 0 0 0

label/twd5 ONLINE 0 0 0

label/twd6 ONLINE 0 0 0

label/twd7 ONLINE 0 0 0

label/twd8 ONLINE 0 0 0

label/twd9 ONLINE 0 0 0

raidz1-2 ONLINE 0 0 0

label/twd10 ONLINE 0 0 0

label/twd11 ONLINE 0 0 0

label/twd12 ONLINE 0 0 0

label/twd13 ONLINE 0 0 0

label/twd14 ONLINE 0 0 0

logs

label/ssd0 ONLINE 0 0 0

spares

label/twd15 AVAIL

errors: No known data errors

[0:3] rz1:~> zpool list

NAME SIZE ALLOC FREE CAP DEDUP HEALTH ALTROOT

data 27.2T 16.3T 10.9T 59% 1.00x ONLINE -
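As a rough cross-check of the figures above, the following Python sketch reconstructs the pool's raw and usable sizes from the per-disk sector count reported in the boot log and the layout shown by `zpool status` (three 5-disk raidz1 vdevs, each giving up one disk's worth of space to parity). The function and variable names are illustrative only, and the calculation ignores ZFS metadata and reserved space, which is why `df` reports a little less than this simple estimate.

```python
# Back-of-the-envelope capacity check for the "data" pool.
# Inputs come from the dmesg and zpool output above; the helper
# names are made up for this sketch.

SECTOR_BYTES = 512
SECTORS_PER_DISK = 3906228224   # from the da1..da16 lines in the boot log
TIB = 2 ** 40

def pool_capacity(vdevs, disks_per_vdev, parity_disks=1):
    """Return (raw_bytes, usable_bytes) for a pool of identical raidz vdevs."""
    disk_bytes = SECTORS_PER_DISK * SECTOR_BYTES
    raw = vdevs * disks_per_vdev * disk_bytes
    usable = vdevs * (disks_per_vdev - parity_disks) * disk_bytes
    return raw, usable

raw, usable = pool_capacity(vdevs=3, disks_per_vdev=5)
print(f"raw:    {raw / TIB:.1f} TiB")     # compare with SIZE in zpool list
print(f"usable: {usable / TIB:.1f} TiB")  # upper bound on what df can show

# df reports 1K-blocks, so convert to TiB for comparison.
df_total_kib = 22955143746
print(f"df:     {df_total_kib * 1024 / TIB:.1f} TiB")
```

The raw figure lines up with the 27.2T shown by `zpool list` (which counts parity space), while the usable estimate is close to the roughly 21TB that `df` reports for `/data`.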

zpool maintenance performance is quite good - a scrub of the whole 16+TB of data runs at slightly under 700MB/second:

[0:4] rz1:~> zpool status

pool: data

state: ONLINE

scan: scrub in progress since Thu Feb 7 18:43:17 2013

15.4T scanned out of 16.4T at 673M/s, 0h25m to go

0 repaired, 94.04% done
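The numbers in the two `zpool status` listings can be sanity-checked with a little arithmetic. The sketch below (Python, with illustrative helper names) works out the average rate of the completed 7h23m scrub and the time remaining for the in-progress one. It assumes that the T and M suffixes denote binary units and that the completed scrub covered about the 16.3T shown as allocated.

```python
# Rough scrub-rate arithmetic using figures from the zpool output above.
# Assumption: "T" and "M" are binary units (TiB, MiB), as ZFS tools
# normally report them.

MIB_PER_TIB = 1024 * 1024

def average_rate_mib_s(scanned_tib, hours, minutes):
    """Average throughput in MiB/s for a scrub of the given duration."""
    seconds = hours * 3600 + minutes * 60
    return scanned_tib * MIB_PER_TIB / seconds

def minutes_remaining(total_tib, scanned_tib, rate_mib_s):
    """Estimated minutes left at the current scan rate."""
    remaining_mib = (total_tib - scanned_tib) * MIB_PER_TIB
    return remaining_mib / rate_mib_s / 60

# Completed scrub: 7h23m; assume it covered about the 16.3T allocated.
print(f"average rate: {average_rate_mib_s(16.3, 7, 23):.0f} MiB/s")

# In-progress scrub: 15.4T of 16.4T scanned at 673M/s.
print(f"time to go:   {minutes_remaining(16.4, 15.4, 673):.0f} minutes")
```

The remaining-time estimate lands within a minute or so of the `0h25m to go` that ZFS printed; the small difference comes from the rounded values shown in the status output.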

One thing that will completely kill scrub performance under FreeBSD is enabling ZFS deduplication. I don't know why this is, as the RAIDzillas have lots of free memory and the disks in the pool only report about 10% I/O utilization (compared with 90%+ during a scrub when deduplication isn't configured). Instead of completing in 6 to 7 hours, a scrub takes more like 6 to 7 days. I discussed the issue with a number of FreeBSD developers and tried the various suggestions that they made, but nothing increased the scrub performance on pools with deduplication enabled. Fortunately (?), the data on my RAIDzillas doesn't lend itself to deduplication, providing only about a 1.02x (2%) gain compared with not doing deduplication, so I was able to rebuild the pools without deduplication to get my performance back.
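To put that trade-off in numbers, here is a small Python sketch. It assumes the dedup ratio is logical data divided by space actually allocated, takes the 16.3T allocation from `zpool list` as the logical data size, and uses 6.5 hours and 6.5 days as midpoints of the ranges quoted above. The function names are illustrative.

```python
# Illustrative arithmetic for the deduplication trade-off described above.
# Assumptions: dedup ratio = logical data / allocated space, and the
# midpoints 6.5 hours / 6.5 days stand in for the quoted ranges.

def space_saved_tib(logical_tib, dedup_ratio):
    """Space that deduplication would avoid allocating, in TiB."""
    return logical_tib - logical_tib / dedup_ratio

def scrub_slowdown(hours_without, days_with):
    """How many times longer a scrub takes with dedup enabled."""
    return days_with * 24 / hours_without

saved = space_saved_tib(logical_tib=16.3, dedup_ratio=1.02)
slowdown = scrub_slowdown(hours_without=6.5, days_with=6.5)

print(f"space saved by dedup: about {saved:.2f} TiB")
print(f"scrub slowdown:       about {slowdown:.0f}x")
```

Under these assumptions, a saving of roughly a third of a terabyte would have cost a scrub that takes on the order of 24 times longer, which makes the decision to rebuild without deduplication easy to understand.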

Here is a Windows 7 view showing (among other systems) a pair of RAIDzilla IIs (Y: and Z:) with 21TB of storage on each. Please note that all of these systems are firewalled from the Internet, so don't bother trying to come visit. It will only annoy me and cause me to make unhappy noises at your ISP.

![[Windows view of two RAIDzilla II's]](windows-explorer.jpg)

![[Sample iozone graph showing 500MB/sec writes]](rz2-iozone.jpg)